Asked By : sai kiran grandhi

Answered By : Realz Slaw

- Memory is usually retrieved from RAM in large blocks (cache lines), not one word at a time.

- Some processing units try to predict future memory accesses and cache ahead (prefetch) while still processing older parts of memory.

- Memory is cached in a hierarchy of successively larger-but-slower caches.

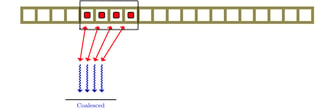
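The first two points can be made concrete with a minimal Python sketch of a toy cache. It assumes a fully associative LRU cache with 64-byte lines and room for 8 lines. These are illustrative numbers, not any real processor's. The names `LineCache` and `misses_for` are hypothetical. The sketch counts how many reads must wait on RAM when a program scans 1,024 consecutive bytes, with and without a simple next-line prefetcher. It models a single cache level, not the full hierarchy.

```python
from collections import OrderedDict


class LineCache:
    """Hypothetical toy model: a fully associative LRU cache that fetches whole lines.

    line_size and capacity_lines are illustrative assumptions, not real hardware values.
    """

    def __init__(self, line_size=64, capacity_lines=8, prefetch_next=False):
        self.line_size = line_size
        self.capacity_lines = capacity_lines
        self.prefetch_next = prefetch_next
        self.lines = OrderedDict()  # line index -> None, least recently used first
        self.misses = 0  # demand misses: accesses that had to wait for RAM

    def _install(self, line):
        # Bring a whole line into the cache, evicting the least recently used one if full.
        self.lines[line] = None
        self.lines.move_to_end(line)
        while len(self.lines) > self.capacity_lines:
            self.lines.popitem(last=False)

    def access(self, addr):
        line = addr // self.line_size
        if line in self.lines:
            self.lines.move_to_end(line)  # hit: refresh its LRU position
            return
        self.misses += 1
        self._install(line)
        if self.prefetch_next and line + 1 not in self.lines:
            # Simple next-line prefetch: guess that the following line is needed soon.
            self._install(line + 1)


def misses_for(addresses, **kwargs):
    cache = LineCache(**kwargs)
    for addr in addresses:
        cache.access(addr)
    return cache.misses


sequential = range(1024)  # read 1,024 consecutive bytes
print(misses_for(sequential))                      # 16: one miss per 64-byte line
print(misses_for(sequential, prefetch_next=True))  # 8: every other line was prefetched
```

Because each miss brings in a whole 64-byte line, 1,024 byte reads cost only 16 trips to RAM. Guessing the next line halves that again for this perfectly predictable scan. Real prefetchers are more elaborate, but they rely on the same predictability.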

Therefore, it is important to write programs whose memory access patterns are predictable. This matters even more in a threaded program. If the threads' memory requests jump all over, the processing unit spends its time waiting for those requests to be fulfilled.

Diagrams inspired by Introduction to Parallel Programming, Lesson 2: GPU Hardware and Parallel Communication Patterns.

Below: four threads with uniform memory access. The black dashed rectangle represents a single 4-word memory request.

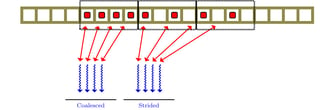

The memory accesses are close together, so they can be retrieved in one go: a single block, the fewest possible requests. However, if we increase the “stride” of the access between the threads, the threads need many more memory requests.

Below: four more threads, with a stride of two.

Here you can see that these 4 threads require 2 memory block requests. The smaller the stride, the better. The wider the stride, the more requests are potentially required. Worse still than a large stride is a random memory access pattern, which is nearly impossible to pipeline, cache, or predict.
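A short Python sketch can reproduce the request counts from the diagrams. It assumes, as the diagrams do, that each request fetches one aligned 4-word block. It also assumes that thread `t` reads word `t * stride`. The helper names `block_requests` and `strided` are hypothetical. Requests are counted as the number of distinct blocks the threads touch.

```python
import random

BLOCK_WORDS = 4  # assumption from the diagrams: one request fetches an aligned 4-word block


def block_requests(word_addresses, block_words=BLOCK_WORDS):
    """Count the distinct aligned blocks that a group of threads touches in one step.

    Each distinct block costs one memory request; threads in the same block share it.
    """
    return len({addr // block_words for addr in word_addresses})


def strided(num_threads, stride):
    # Hypothetical access pattern: thread t reads word t * stride.
    return [t * stride for t in range(num_threads)]


# Prints 1, 2, 4 and 4 requests for strides 1, 2, 4 and 8.
for stride in (1, 2, 4, 8):
    print(f"stride {stride}: {block_requests(strided(4, stride))} request(s)")

rng = random.Random(0)
random_words = rng.sample(range(1024), 4)  # 4 threads reading unrelated words
print(f"random: {block_requests(random_words)} request(s)")
```

With four threads, the count grows from 1 to 2 to 4 as the stride widens, then stays at 4. Once the stride reaches the block size, every thread needs its own request, so the thread count is the ceiling. The random case usually lands at that ceiling too. It also gives a prefetcher nothing to predict.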


Best Answer from Computer Science Stack Exchange

Question Source : http://cs.stackexchange.com/questions/18229